29.04.2026

At the annual RC Trust Retreat, early-career researchers explored how exchange, interdisciplinarity, and real-world perspectives strengthen trustworthy AI.

Photo: Ankur Bhatt


How does the responsible AI research of tomorrow emerge? It grows where different perspectives meet: technical, social, ethical, medical, legal, and human-centered.

This idea shaped this year’s RC Trust Retreat. Centered on the Graduate School, the retreat brought PhD researchers, postdocs, principal investigators, and guests together for three days of exchange, reflection, and exploration. Rather than simply presenting finished results, the program created space to ask broader questions: What does trustworthy AI need from research? Where do technical models meet real-world consequences? And how can early-career researchers learn to navigate this complexity?

A Graduate School built on exchange

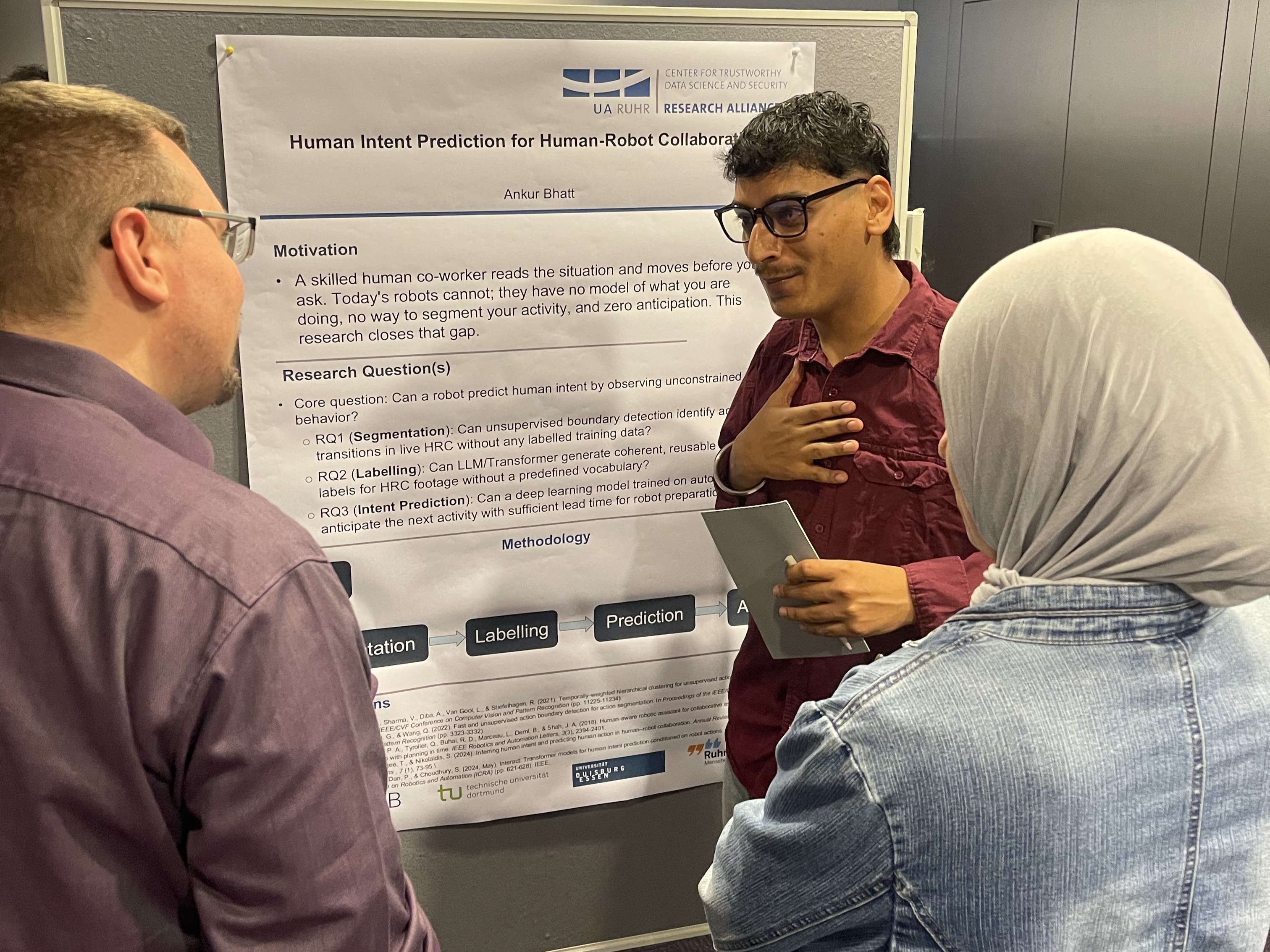

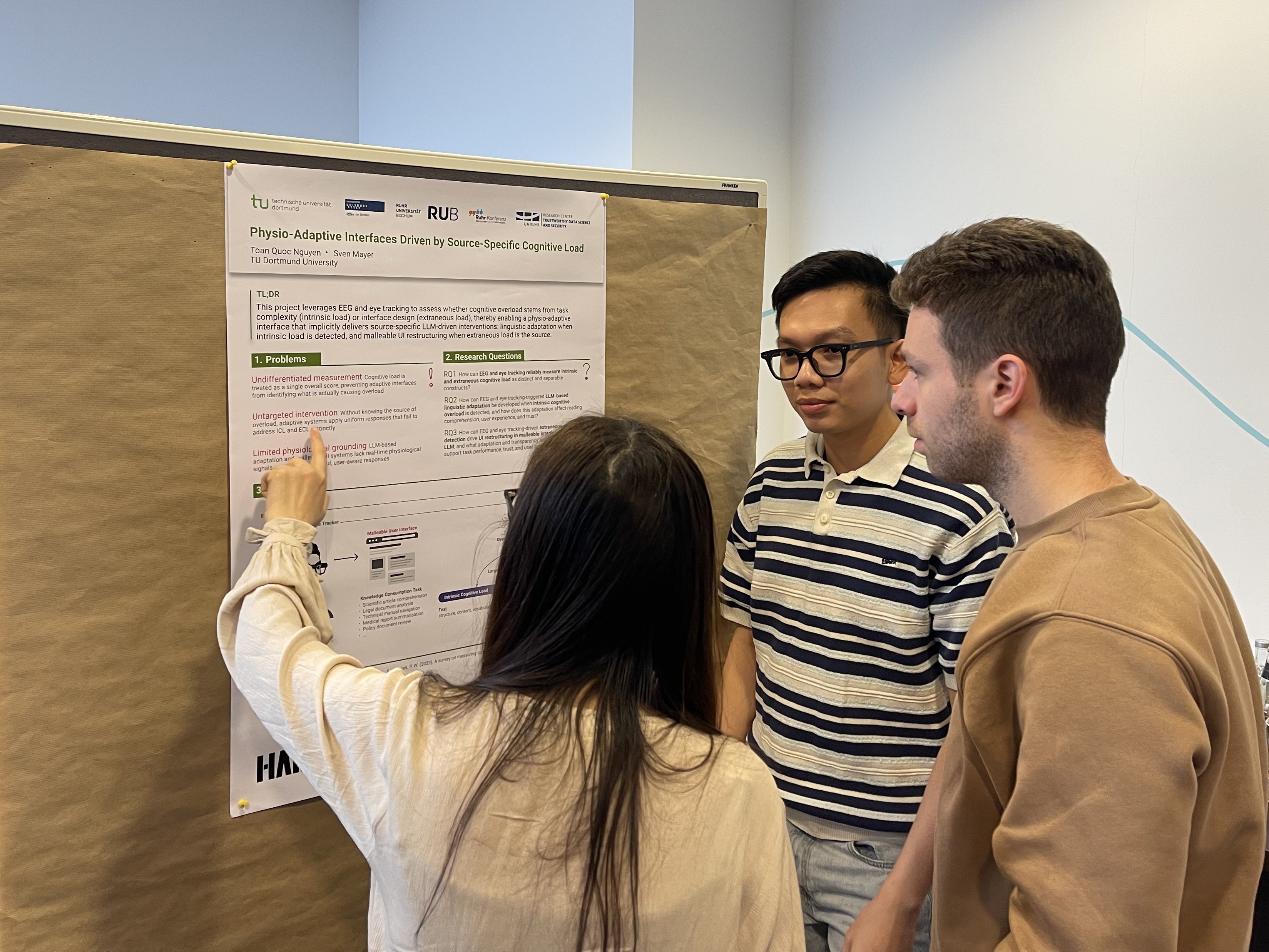

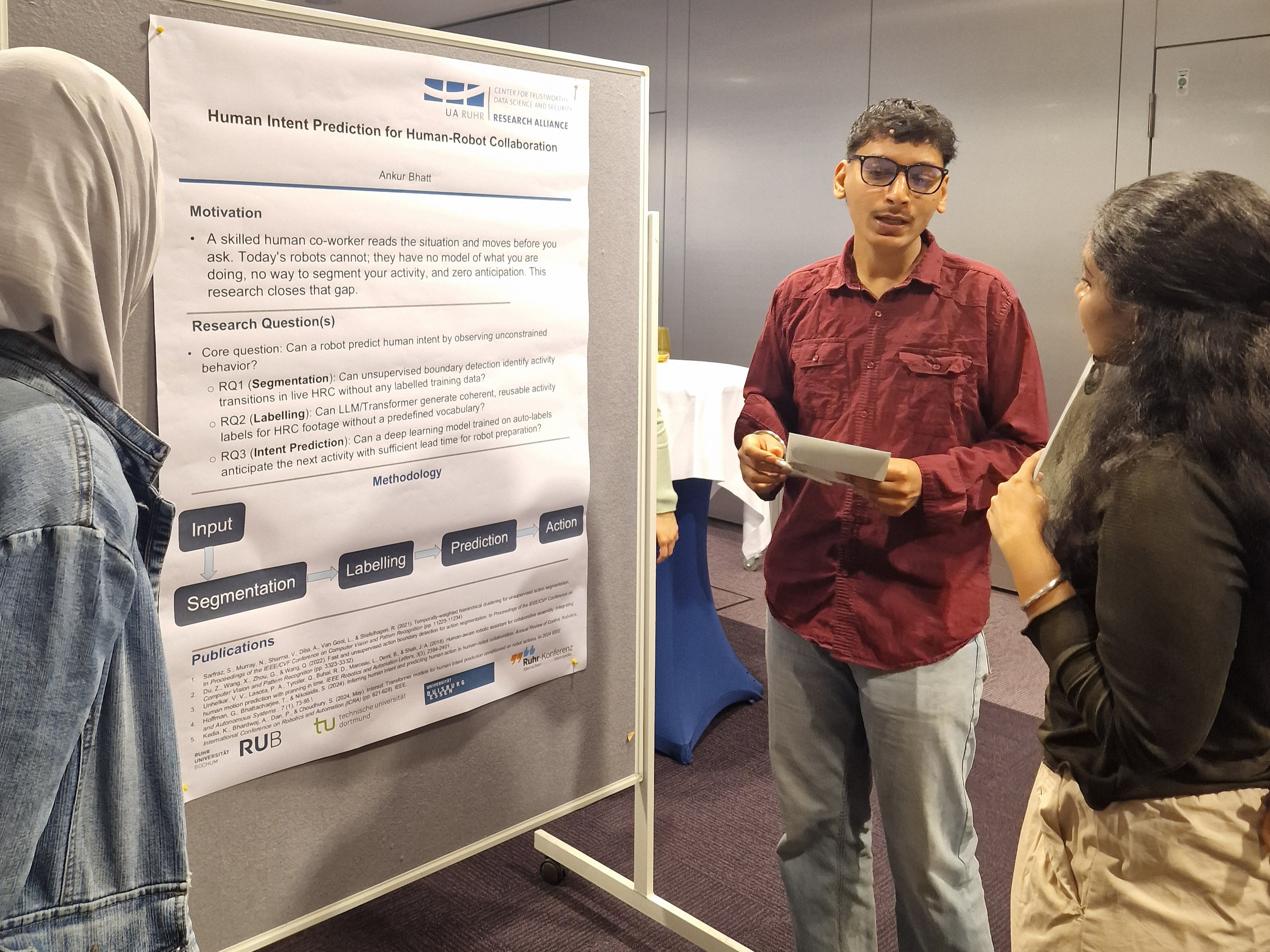

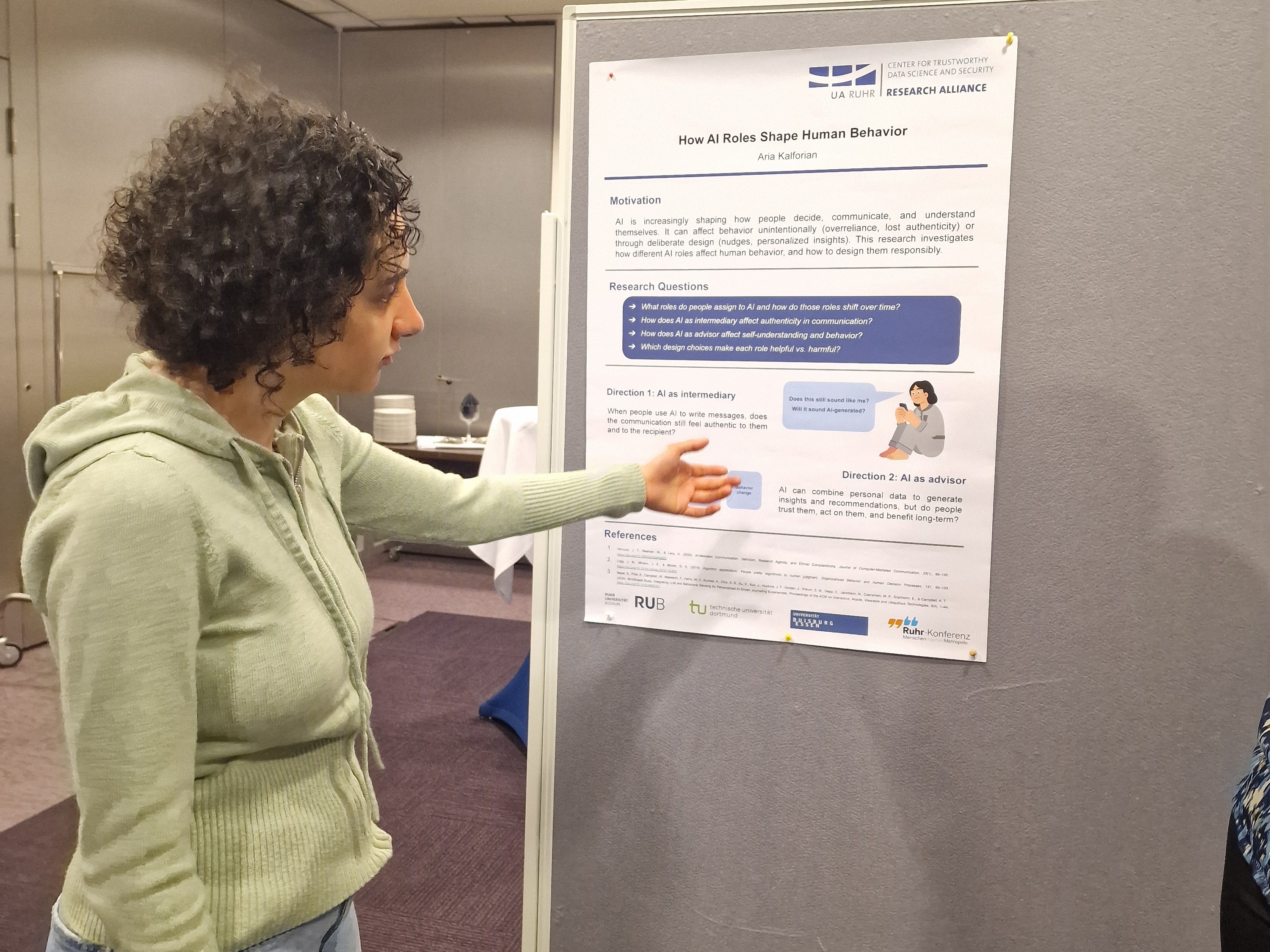

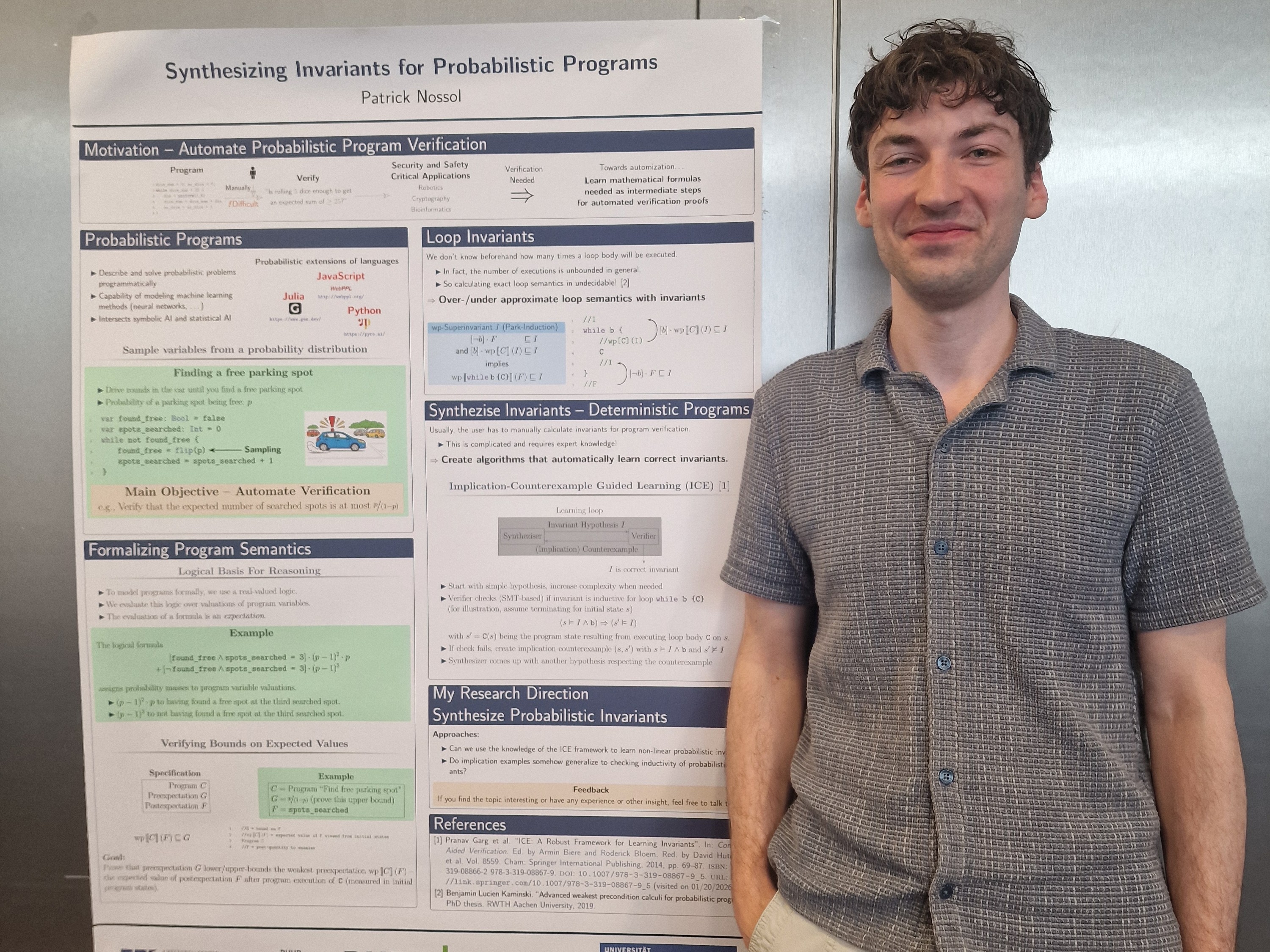

A central aim of the retreat was to strengthen the Graduate School as a place of academic growth and interdisciplinary learning. Poster sessions offered researchers the opportunity to present their work, receive feedback, and discover connections across projects and disciplines.

The format also made visible what defines the RC Trust: expertise from different fields does not run in parallel, but enters into conversation. Questions from machine learning, human-computer interaction, medicine, psychology, statistics, law, and society were brought together in a shared space.

This matters because trustworthy AI is not only a technical challenge. It also depends on how systems are used, interpreted, governed, and trusted.

Learning from places where AI meets reality

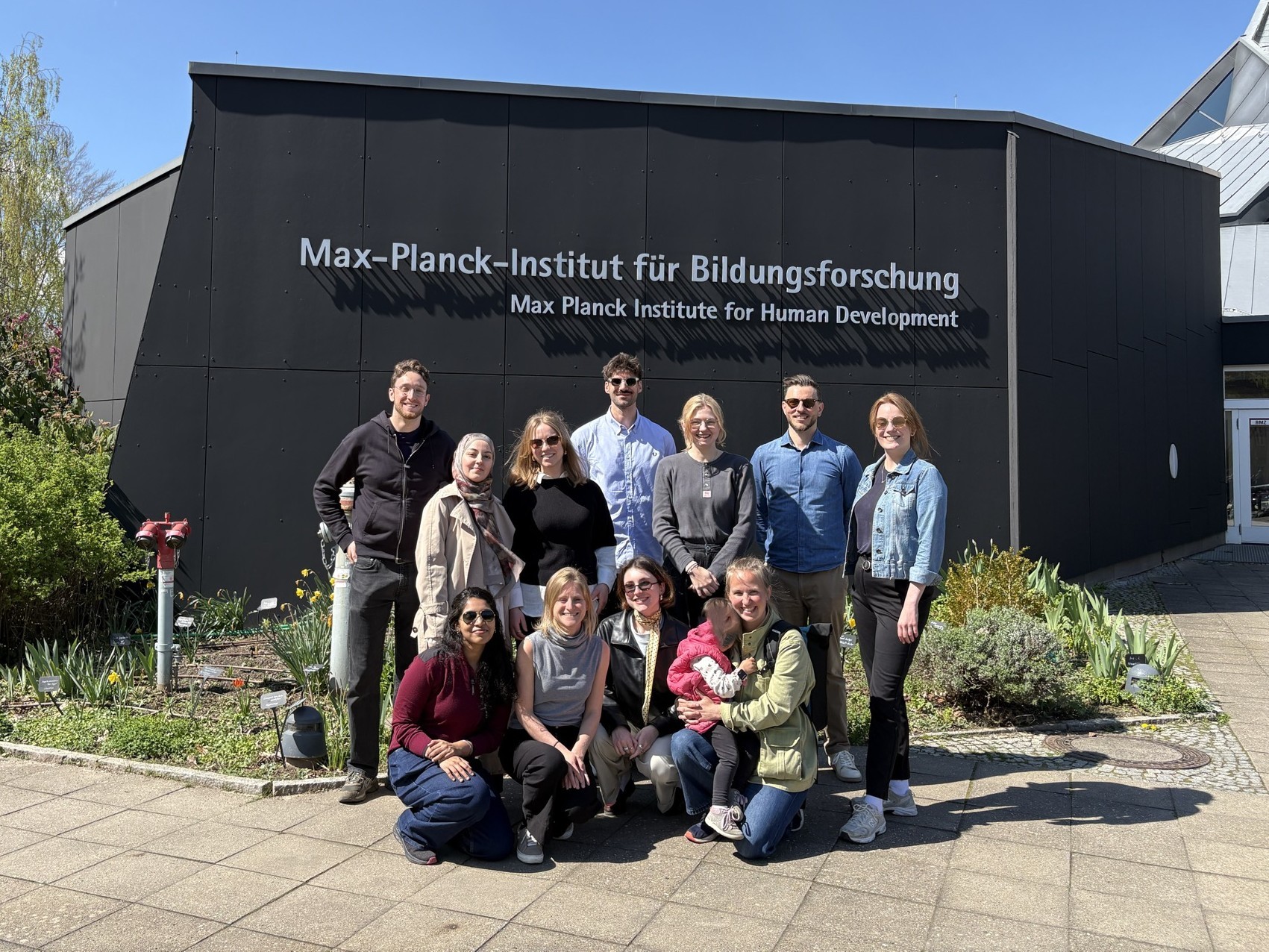

The retreat also included four excursions that opened windows into different areas of research and application. Visits to the Max Planck Institute for Human Development, the Hasso Plattner Institute’s Human-Computer Interaction and Machine Learning groups, and Charité offered valuable insights into research environments closely connected to the RC Trust’s own questions.

For the center, these visits were more than external program points. They showed how AI research takes shape in different contexts: in fundamental research, in interaction between humans and technology, in machine learning, and in medicine, where data-driven systems can have direct consequences for people’s lives.

These encounters helped connect the Graduate School’s internal discussions with broader scientific and societal challenges. They also strengthened networks with institutions whose work touches central questions of trustworthy data science and security.

From risks to blind spots

Discussions and exchange played a central role throughout the retreat. To create space for open dialogue across disciplines and research perspectives, many of these conversations were structured in a Barcamp format – a participant-driven approach where topics emerge from the community itself.

In these Barcamp sessions, the retreat turned into an active thinking space. Participants discussed surprising behavior in deep learning, the gap between mathematical formalisms and real-world consequences, collaborations with industry, AI in medicine and health, and the distinction between risk and harm in AI systems.

One session explored how large language models might help surface missing assumptions or overlooked next steps. Another used an interactive format to discuss when an AI-related problem is still a risk and when it has already become harm. In addition to these sessions, further discussions emerged organically during the retreat. They covered equality and sexism in research, the role of language in shaping how we talk about AI, the perception of AI tools in research practice, safety and security in agentic AI, and questions around potential communicative decline through increased AI use. Other sessions explored virtual reality in research and data science approaches in environmetrics.

These topics reflect a core concern of the RC Trust: responsible AI requires more than performance. It requires awareness of limits, consequences, and contexts. It also requires calibrated trust – the ability to understand when trust in AI is justified, when skepticism is necessary, and how both can be grounded in evidence.

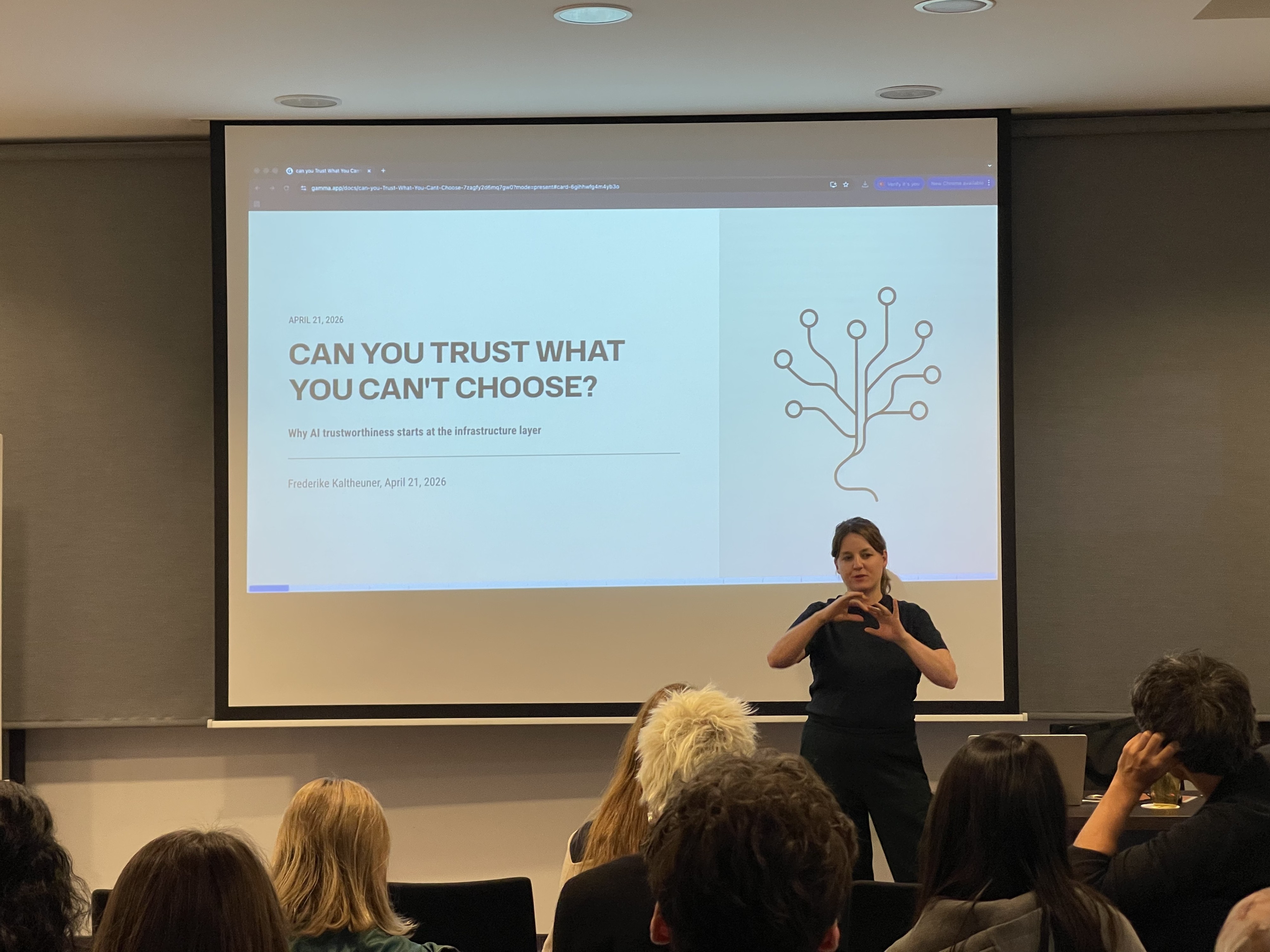

This perspective was further enriched by a guest talk from Frederike Kaltheuner, who addressed the broader societal and political dimensions of digital technologies. Her contribution added an external viewpoint on how questions of power, governance, and accountability shape the development and deployment of AI – and why technical excellence alone is not sufficient to ensure trustworthy systems.

Time to connect, question, and continue

The retreat deliberately balanced structured sessions with time for informal exchange. A guest talk, a PhD and postdoc get-together, poster discussions, group activities, and open meeting slots created opportunities to continue conversations beyond formal presentations.

This balance was essential. Networks do not emerge only from scheduled talks. They grow in follow-up questions, shared walks, spontaneous discussions, and the realization that another discipline may hold part of the answer to one’s own research problem.

Shaping trustworthy AI together

The annual retreat showed how the RC Trust approaches its mission: by connecting people, disciplines, institutions, and perspectives. It supports early-career researchers not only in developing excellent research, but also in understanding the wider contexts in which AI systems operate.

In this sense, the retreat was not just a meeting of the center. It was a glimpse into how trustworthy AI research can be built: through openness, critical reflection, and collaboration across boundaries.

Category

- Network

- Event

Author

Patrick Wilking