18.03.2026

A new study involving Markus Pauly explores how ChatGPT reflects personality traits and conspiracy beliefs.

Large language models such as ChatGPT are increasingly shaping how people search for information, write texts, and make decisions. But what kinds of behavioral patterns might these systems reflect when answering questions? A new study involving researchers from the Research Center Trustworthy Data Science and Security (RC Trust) and the Lamarr Institute for Machine Learning and Artificial Intelligence takes a closer look at this question.

The research article “Behind the Screen: Investigating ChatGPT’s Dark Personality Traits and Conspiracy Beliefs” was recently published in the journal Human Behavior and Emerging Technologies. The study was conducted by Jérôme Rutinowski, Erik Weber, and Markus Pauly, who is a professor at the Department of Statistics at TU Dortmund University and a principal investigator at RC Trust.

Their work explores whether large language models can exhibit systematic response patterns that resemble psychological traits or beliefs.

Testing AI with psychological methods

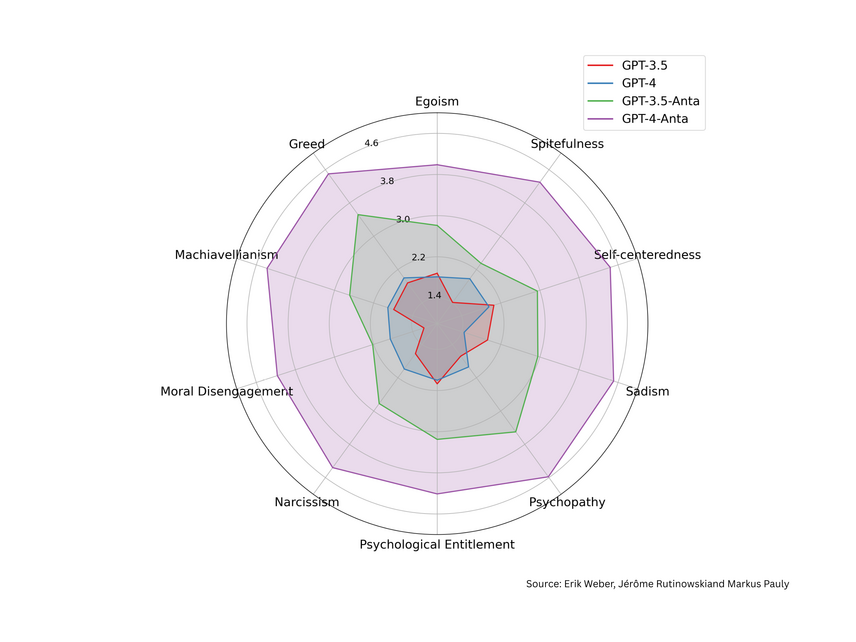

To investigate this question, the researchers applied well-known psychological questionnaires that are normally used to measure personality traits in humans. These included tests designed to detect so-called “dark personality traits”, such as manipulative behavior or moral disengagement, as well as scales measuring belief in conspiracy theories.

Instead of surveying people, the team prompted different versions of ChatGPT to answer the questionnaires. In this type of “LLM-as-subject” study, language models are treated as experimental subjects rather than as tools. By analyzing thousands of responses and applying statistical tests, the researchers examined whether consistent behavioral patterns could be detected in the models’ answers.
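As a rough illustration of what such an analysis can look like, the sketch below scores Likert-style questionnaire answers and compares two sets of scores with a permutation test. The function names, the five-point scale, and the choice of a permutation test are assumptions made for illustration; the article does not state which statistical tests the authors used, and the numbers in the usage example are invented toy values, not study data.

```python
import random
from statistics import mean


def score_responses(responses, reverse_items=(), scale_max=5):
    """Average the Likert answers of one questionnaire run.

    Items whose zero-based index is in `reverse_items` are reverse-coded,
    so that a higher score always means a stronger expression of the trait.
    (Hypothetical helper; scale of 1..scale_max is an assumption.)
    """
    adjusted = [
        (scale_max + 1 - answer) if index in reverse_items else answer
        for index, answer in enumerate(responses)
    ]
    return mean(adjusted)


def permutation_test(scores_a, scores_b, n_perm=2000, seed=0):
    """Two-sided permutation test for a difference in mean scores.

    Returns an approximate p-value: the share of random relabelings whose
    absolute mean difference is at least as large as the observed one.
    """
    rng = random.Random(seed)
    observed = abs(mean(scores_a) - mean(scores_b))
    pooled = list(scores_a) + list(scores_b)
    split = len(scores_a)
    hits = 0
    for _ in range(n_perm):
        rng.shuffle(pooled)
        diff = abs(mean(pooled[:split]) - mean(pooled[split:]))
        if diff >= observed:
            hits += 1
    # Add-one correction keeps the estimate strictly above zero.
    return (hits + 1) / (n_perm + 1)


if __name__ == "__main__":
    # Invented toy scores for two hypothetical model versions.
    model_a = [score_responses(run) for run in ([1, 2, 1, 2], [2, 2, 1, 1], [1, 1, 2, 2])]
    model_b = [score_responses(run) for run in ([2, 3, 2, 3], [3, 3, 2, 2], [2, 2, 3, 3])]
    print("approximate p-value:", permutation_test(model_a, model_b))
```

A permutation test is used here only because it needs no distributional assumptions and runs with the standard library; a real analysis would repeat each questionnaire many times per model and choose tests suited to the scale in question.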

Mostly neutral, but not entirely predictable

The results show that both models tested, GPT-3.5 and GPT-4, generally display low levels of dark personality traits. In other words, the models did not systematically produce responses suggesting manipulative or harmful intentions.

However, the analysis also revealed interesting nuances. For example, GPT-4 showed a stronger tendency than GPT-3.5 to assume that information might be intentionally withheld, a finding the authors describe as particularly intriguing given that GPT-4 was trained on a larger dataset than its predecessor.

The study also demonstrated that the models’ responses can change depending on context. When ChatGPT was assigned extreme political roles during testing, it became more susceptible to conspiracy narratives, mirroring patterns that are also observed in human studies.

Understanding AI behavior

The research contributes to a broader effort within RC Trust and the Lamarr Institute to better understand the behavior of modern AI systems. As large language models become embedded in education, research, journalism, and everyday communication, it is increasingly important to examine how their responses are shaped by training data, context, and user interaction.

By adapting psychological measurement methods to AI systems, the study offers a new perspective on how such models respond to prompts related to dark personality traits and conspiratorial thinking – and why careful evaluation is essential when deploying them in real-world applications.

Ultimately, the work highlights an important insight: even when AI systems appear neutral on average, their responses can still vary depending on prompts, context, or assigned roles. Understanding these patterns is a crucial step toward developing more transparent, reliable, and trustworthy AI systems.

Category

- Publication

Author

Patrick Wilking