06.03.2026

Meltem Aksoy studies how political bias in language models changes over time.

Source: Meltem Aksoy

Large language models such as ChatGPT have quickly become part of everyday life. They help people search for information, write texts, and support decision-making in many areas of society. Yet these systems are trained on vast amounts of human-generated data – and that means they can also reproduce social and political biases found in those data.

In a new study published in Applied Stochastic Models in Business and Industry, Dr. Meltem Aksoy, a researcher at the Department of Computer Science at TU Dortmund University, investigates how such biases in language models change over time. The work, "Evaluating Biases in Large Language Models Over Time: A Framework With a GPT Case Study on Political Bias," was carried out in collaboration with Prof. Markus Pauly, who leads the Chair of Mathematical Statistics and Applications in Industry and is a founding member of the Research Center Trustworthy Data Science and Security (RC Trust) within the University Alliance Ruhr.

The study addresses a problem that has often been overlooked in AI research. Commercial language models are updated frequently, but these updates are rarely documented in detail. As a result, conclusions about how a model behaves today may no longer apply tomorrow. Despite this, many previous studies have evaluated only a single model version at a single point in time.

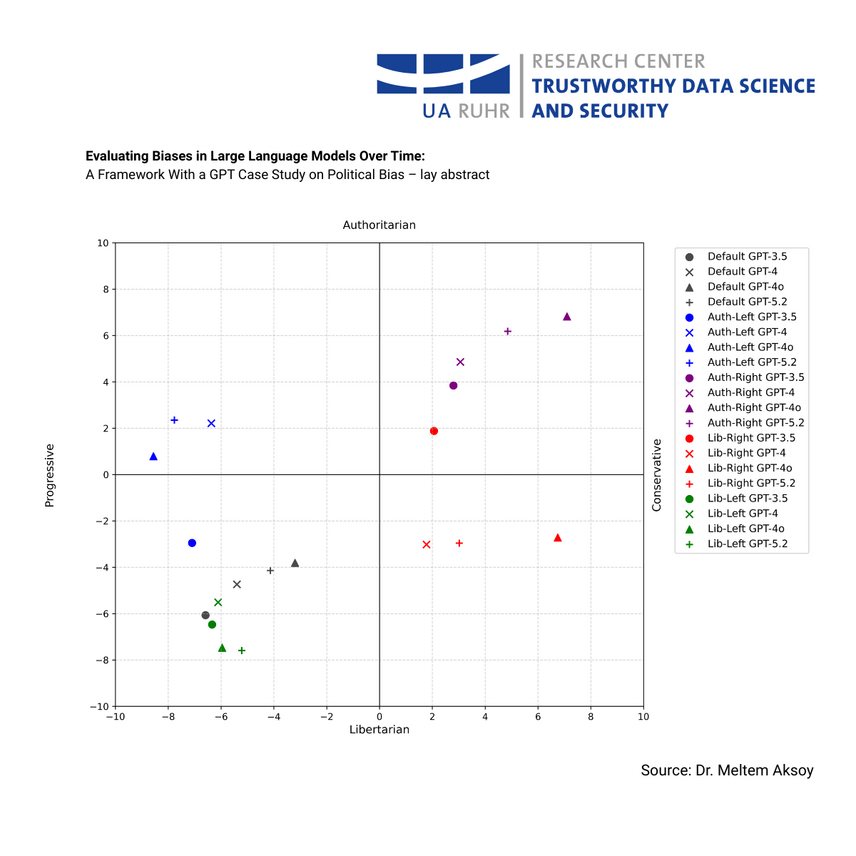

Aksoy and her co-authors therefore developed a systematic framework that allows researchers to track how biases in language models evolve across different versions. Instead of focusing on technical model internals, which are often inaccessible in commercial systems, the proposed method analyzes how models respond to a large number of carefully designed prompts over time. Political bias serves as a case study because it is highly relevant for public debates about AI.

Using multiple versions of ChatGPT, the researchers analyzed thousands of generated responses to political statements and viewpoints. Their results show that the models’ tendencies do change with updates: some newer versions appear somewhat less strongly aligned with certain political positions than earlier ones. At the same time, the models still reproduce recognizable ideological patterns and can even imitate specific political perspectives when prompted to do so.
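To make the idea of tracking responses across versions more concrete, here is a minimal sketch in Python. It is not the statistical framework from the study. The version labels, prompt IDs, and the coding of responses as -1 (disagree), 0 (neutral), and +1 (agree) are all assumptions made for illustration, and the data are invented. The sketch only shows how responses to the same prompts could be summarized per model version and compared.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical records: (model_version, prompt_id, stance_score).
# The stance coding is an assumption: -1 = disagree, 0 = neutral, +1 = agree.
responses = [
    ("version-A", "p1", 1), ("version-A", "p2", 1),
    ("version-A", "p3", 0), ("version-A", "p4", 1),
    ("version-B", "p1", 1), ("version-B", "p2", 0),
    ("version-B", "p3", 0), ("version-B", "p4", -1),
]


def mean_stance_by_version(records):
    """Return the average stance score for each model version."""
    scores = defaultdict(list)
    for version, _prompt_id, score in records:
        scores[version].append(score)
    return {version: mean(values) for version, values in scores.items()}


def shift_between(records, old, new):
    """Return the mean per-prompt change in stance from `old` to `new`.

    Only prompts answered by both versions are compared, so the
    difference is not driven by a changed prompt set.
    """
    by_version = defaultdict(dict)
    for version, prompt_id, score in records:
        by_version[version][prompt_id] = score
    shared = sorted(set(by_version[old]) & set(by_version[new]))
    if not shared:
        raise ValueError("the two versions share no prompts")
    return mean(by_version[new][p] - by_version[old][p] for p in shared)


summary = mean_stance_by_version(responses)
shift = shift_between(responses, "version-A", "version-B")
print(summary)
print(shift)
```

A real evaluation would need many more prompts, repeated queries per prompt, and a proper statistical test before drawing conclusions from such a shift.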

The findings highlight an important challenge for the future of AI. If AI systems are continuously evolving, evaluating them only once is no longer sufficient. Instead, ongoing and transparent monitoring becomes essential, especially when these systems influence public discourse, education, or access to information.

By developing statistical tools to observe such changes systematically, the researchers provide a foundation for more responsible oversight of AI systems. The approach could also be applied beyond political bias, helping to monitor fairness, reliability, and other socially relevant aspects of AI.

The work illustrates how statistical methodology and trustworthy AI research come together at TU Dortmund’s Department of Statistics and Department of Computer Science. Within the RC Trust, researchers like Meltem Aksoy and Markus Pauly contribute to building data-driven technologies that are not only powerful, but also transparent, reliable, and worthy of public trust.

For more context:

This study also continues a growing line of empirical research on large language models conducted within the Research Center Trustworthy Data Science and Security. Earlier work by researchers affiliated with the center has already examined political biases in conversational AI systems. For example, a large collaborative study ahead of the German federal elections analyzed how AI-based tools and language models respond to political statements from the well-known Wahl-O-Mat questionnaire and found clear systematic biases in their responses.

Previous work from TU Dortmund researchers had already attracted broader public attention when early experiments suggested that ChatGPT tends to produce answers reflecting progressive and libertarian political positions. These findings contributed to an ongoing scientific discussion about how biases emerge in AI systems and how they should be measured and monitored. The new study by Meltem Aksoy and her co-authors builds on this foundation by introducing a framework that allows such biases to be analyzed systematically across different model versions over time.

Category

- Publication

Author

Patrick Wilking