28.04.2026

Award-winning papers and new insights into AI, interaction, and governance at a leading international conference.

The ACM CHI Conference on Human Factors in Computing Systems is one of the world’s leading venues for research on how people interact with technology. Each year, it brings together an international community to present cutting-edge work at the intersection of computing, society, and design.

At CHI 2026, researchers from the Research Center Trustworthy Data Science and Security (RC Trust) contributed a strong and diverse set of results – highlighting both technical innovation and societal relevance.

Governing AI in practice

The Compliant and Accountable Systems Research Group, led by Prof. Jat Singh, focuses on how emerging technologies can be designed and governed responsibly. Combining technical, legal, and societal perspectives, the group addresses a central challenge: how to make complex AI systems accountable in real-world contexts.

This approach was clearly reflected in their contributions to CHI 2026. With four accepted papers – among them a Best Paper Award and an Honorable Mention – the group achieved remarkable visibility.

The Best Paper “Who Controls the Conversation? User Perspectives on Generative AI (LLM) System Prompts” by Anna Neumann, Yulu Pi, and Jatinder Singh examines how users perceive and interact with system prompts in generative AI, an often overlooked but highly influential layer of control.

https://dl.acm.org/doi/10.1145/3772318.3791726

The Honorable Mention paper “‘It’s Just a Wild, Wild West’: Harnessing Public Procurement as an AI Governance Mechanism” (Anna Ida Hudig, Emma Kallina, Jatinder Singh) explores how public procurement can shape the governance of AI systems in practice.

https://dl.acm.org/doi/10.1145/3772318.3791968

Further contributions extend this perspective. “Who Does What? Archetypes of Roles Assigned to LLMs During Human-AI Decision-Making” (Shreya Chappidi, Jatinder Singh, Andra Valentina Krauze) analyses the impact of different roles that AI can take in decision-making processes.

https://dl.acm.org/doi/10.1145/3772318.3791428

In “The Limits of Stakeholder Participation in Safety-Critical Contexts: Lessons from Air Traffic Control” (Emma Kallina, Constanze M. Leeb, Jatinder Singh), the team investigates potential limitations and other considerations of participatory development approaches.

https://dl.acm.org/doi/10.1145/3772318.3790959

Beyond papers, the group also contributed to community activities around emerging topics. Jatinder Singh co-organized the meet-up “Disability, Differences, and Diversity: Revisiting Inclusive Design and Access” together with Giulia Barbareschi and an international team of researchers.

https://dl.acm.org/doi/10.1145/3772363.3778798

In the workshop “Participatory Data Governance in Practice” (Jovan Powar, Emma Marlene Kallina, Anna Ida Hudig, Jatinder Singh, Lin Kyi, Heleen Janssen, Genevieve Smith, Renwen Zhang), the discussion focused on how participatory approaches to data governance can be implemented in practice.

https://dl.acm.org/doi/10.1145/3772363.3778746

In the meet-up “How Could AI Supply Chain Research Shape HCI Inquiries And Vice-Versa?” (Inha Cha, David Gray Widder, Blair Attard-Frost, Jatinder Singh, Agathe Balayn), participants explored the intersection between AI supply chains and human-centred computing research.

https://dl.acm.org/doi/10.1145/3772363.3778800

Designing interaction and perspective

The Human-AI Interaction group, led by Prof. Sven Mayer, approaches trustworthy AI from a different angle: how people experience, interpret, and engage with intelligent systems in practice.

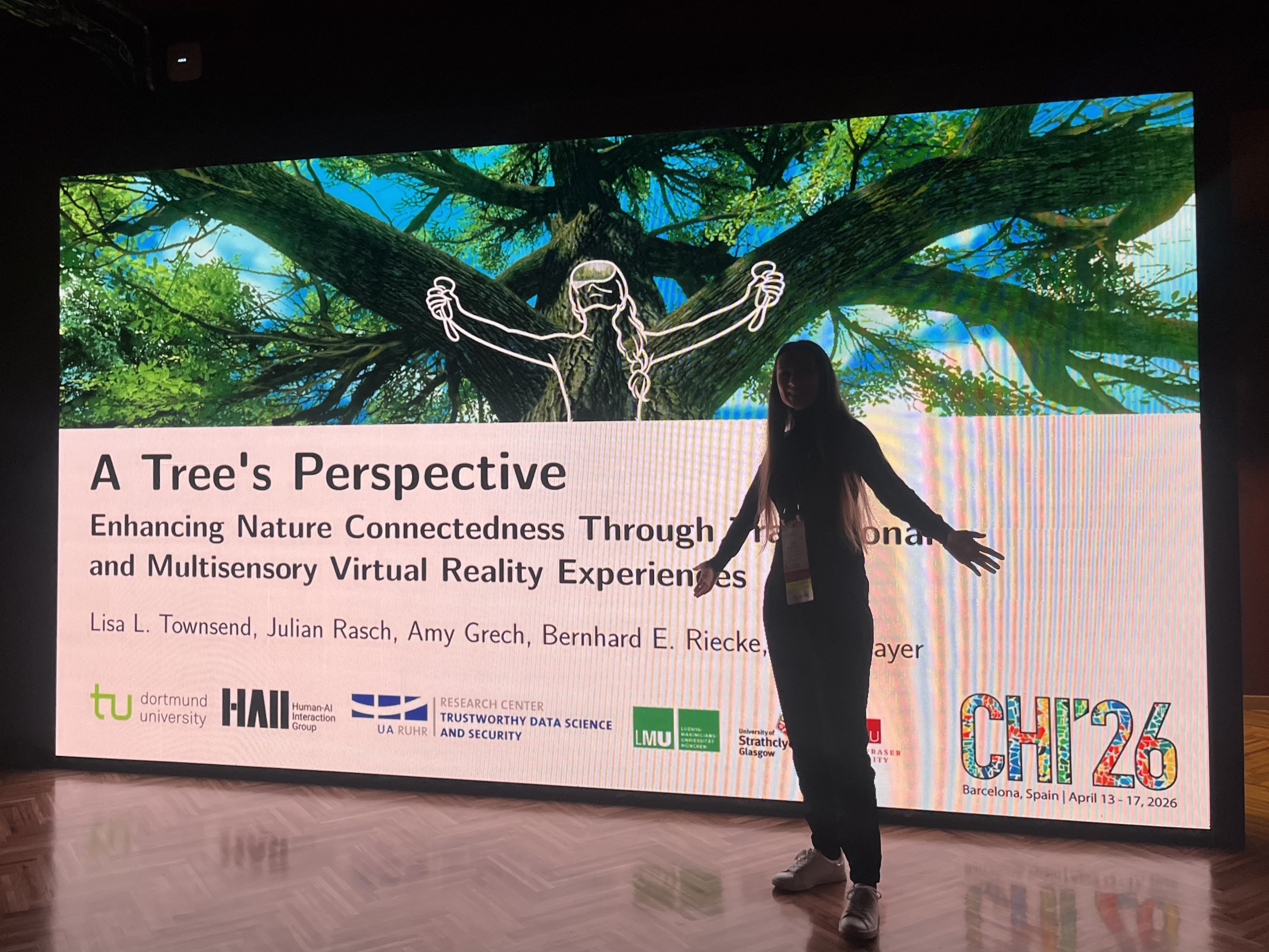

At CHI 2026, the group presented “A Tree’s Perspective: Enhancing Nature Connectedness Through Transitional and Multisensory Virtual Reality Experiences” by Lisa Townsend, Julian Rasch, Amy Grech, Bernhard Riecke, and Sven Mayer. The paper was recognized with an Honorable Mention Award.

https://dl.acm.org/doi/10.1145/3772318.3790282

The work explores how immersive, multisensory virtual reality can shift perception and foster a stronger sense of connection to the natural world. By placing users in unfamiliar perspectives, it opens up new directions for how interaction design can shape experience and reflection.

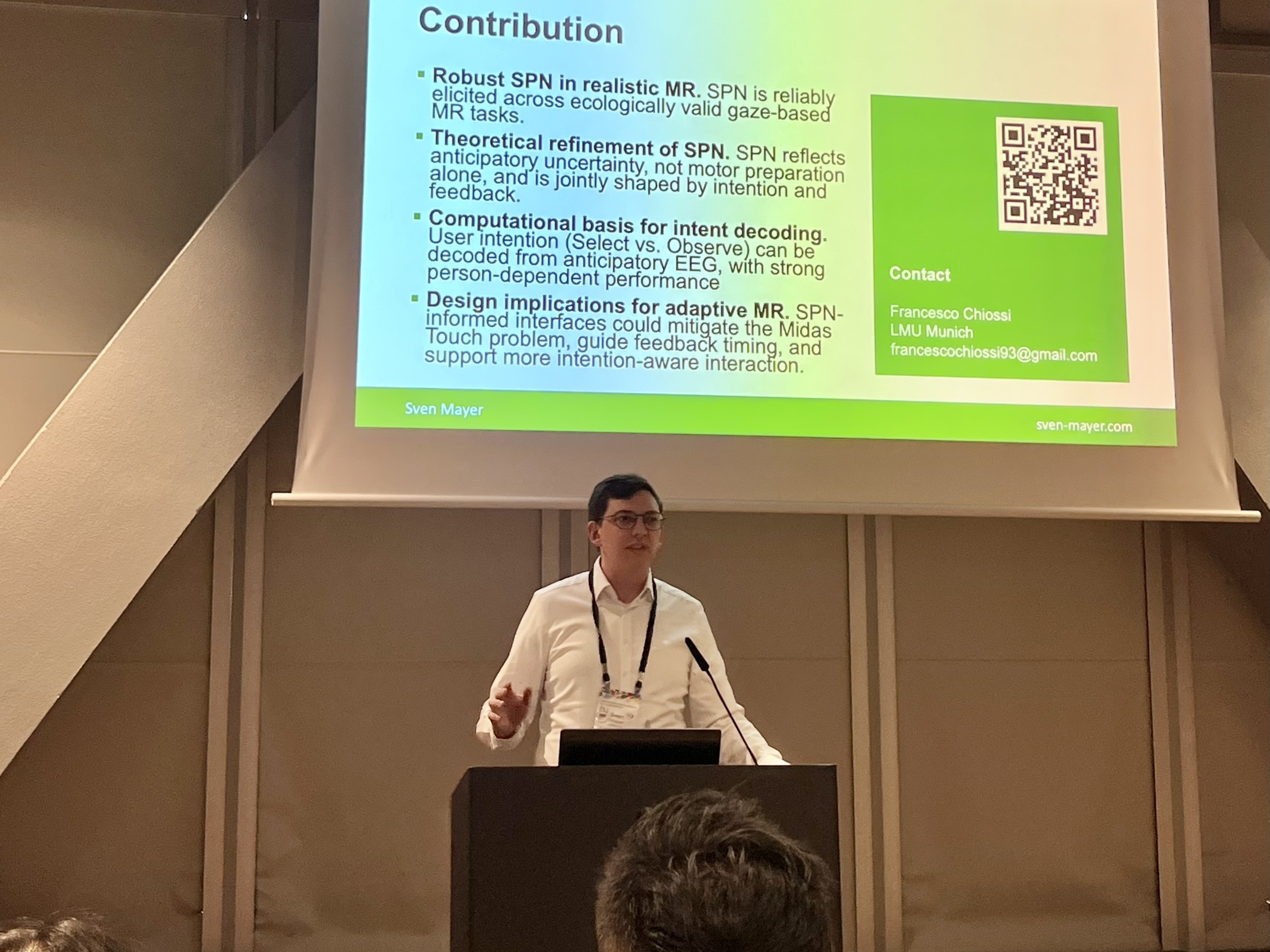

In an interdisciplinary collaboration with researchers from neurophysiology, the team contributed “Anticipation Before Action: EEG-Based Implicit Intent Detection for Adaptive Gaze Interaction in Mixed Reality”, exploring the use of electroencephalography (EEG) as an implicit marker for building intention-aware mixed reality interfaces that mitigate the Midas Touch problem.

https://dl.acm.org/doi/10.1145/3772318.3790523

With partners from Sydney, Australia, the team also built on earlier work and presented “Understanding the Effects of Interaction on Emotional Experiences in VR.” The paper contributes an open-source tool that enables others to effectively elicit emotions in virtual reality.

https://dl.acm.org/doi/10.1145/3772318.3790313

In their fourth full paper, they presented “Balancing Accuracy and Embodiment: A Hybrid Perspective for Complex Visuomotor Tasks in VR.” In this work, the authors demonstrate the versatility of virtual reality in supporting users in balancing tasks: a new perspective shows users both their own arms and an out-of-body view, which simplifies balancing.

https://dl.acm.org/doi/10.1145/3772318.3791472

Additionally, together with international collaborators, the group organized the meet-up “PhysioCHI: Lessons Learned from Implementing Human-Centered Physiological Computing” (Kathrin Schnizer, Teodora Mitrevska, Benjamin Tag, Abdallah El Ali, and Sven Mayer) to bring together people from around the world interested in physiological HCI.

https://dl.acm.org/doi/10.1145/3772363.3778794

In addition, PhD researchers from the group participated in several workshops and meet-ups, contributing to ongoing discussions across the CHI community. These covered topics spanning empathic computing, AI-mediated communication, user interfaces for human-AI interaction, paper presentations with senior researchers, and embodying human-human communication with tangible user interfaces.

Finally, Sven Mayer not only contributed to the conference scientifically but also led a community feedback session on “Upcoming changes to the CHI full paper peer review process”. The topic is dear to his heart, both as a Steering Committee member and because he will oversee all publications as the next Technical Program Co-Chair at CHI’27.

More on HAII at CHI 2026 is available in the chair’s news section.

Inclusion and engagement in focus

The Chair of Inclusive Technology and Collective Engagement, led by Prof. Giulia Barbareschi, brings yet another important perspective to CHI 2026: how interactive systems can be designed to better account for diversity, accessibility, and collective experiences.

The paper “Animacy and the Eye of the Beholder: A Mixed-Methods Study on Cognitive Animacy with a Kinetic Origami Surface” by Charalampos Krekoukiotis, Giulia Barbareschi, Berend te Linde, Katsuki Higo, Ryogo Kawamata, Sotaro Shimada, Takatoshi Yoshida, and Kouta Minamizawa is part of the collaboration with the Keio School of Media Design in Japan. It investigates how people perceive animacy in robots that deliberately do not resemble humans or animals. The study combines qualitative and quantitative methods to better understand how motion and materiality influence human perception, and how to design better robots whose ambiguous qualities leave more room for personal interpretation during interaction.

https://dl.acm.org/doi/10.1145/3772318.3791969

Beyond the paper, the group was actively involved in community formats. As mentioned earlier, Giulia Barbareschi co-organized the meet-up “Disability, Differences, and Diversity: Revisiting Inclusive Design and Access” together with Jatinder Singh and an international team of collaborators, fostering discussion on inclusive design and accessibility within HCI.

https://dl.acm.org/doi/10.1145/3772363.3778798

The workshop “(Re-)Thinking Empathy’s Materiality in HCI” further explored how empathy can be understood and designed for in interactive systems. Here, the workshop organizers (including Giulia Barbareschi and Sven Mayer) brought together researchers across disciplines to map the existing research landscape, discuss risks and management strategies, and identify novel opportunities for innovation.

https://dl.acm.org/doi/10.1145/3772363.3778717

Perception matters

The Psychological Aspects of Human-Algorithm-Interaction Young Investigator Group adds another important dimension: how people perceive and evaluate AI systems in everyday contexts.

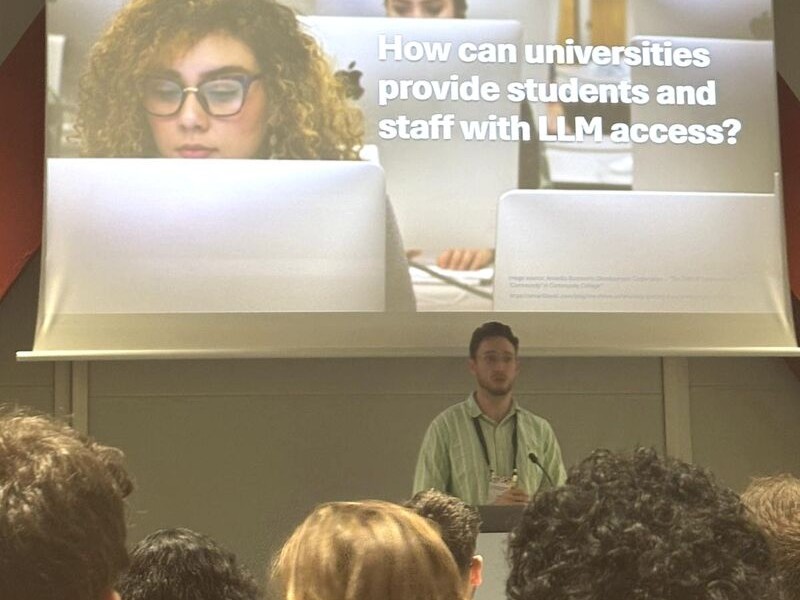

At CHI 2026, Leon Hannig presented the paper “Campus AI vs. Commercial AI: Comparing How Students and Employees Perceive their University’s LLM Chatbot vs. ChatGPT” (written by Leon Hannig, Annika Bush, Meltem Aksoy, Tim Trappen, Steffen Becker, and Greta Ontrup).

https://dl.acm.org/doi/10.1145/3772318.3790622

The study compares how users assess institutional AI systems in contrast to widely used commercial tools. It highlights how context, expectations, and trust differ depending on where and how AI is deployed – an increasingly relevant question as organizations integrate their own AI solutions.

Trust, certification, and perception

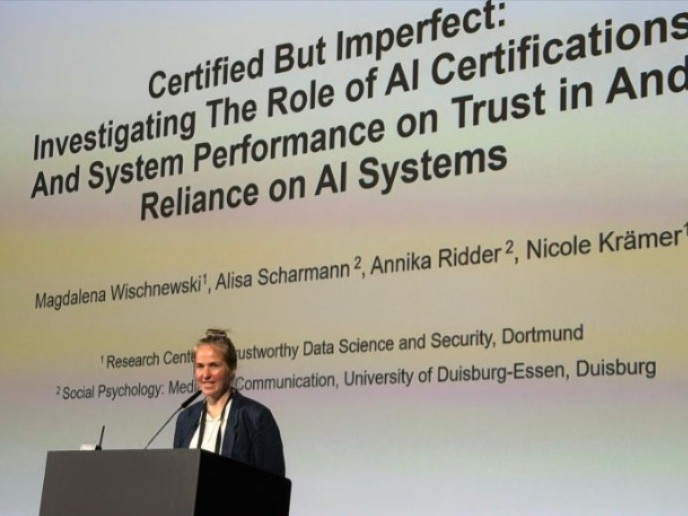

Research from the Chair of Social Psychology: Media & Communication, led by Prof. Nicole Krämer, further deepens the perspective on how people evaluate and trust AI systems in practice. At CHI 2026, two scholars from Prof. Krämer’s chair, PhD student Nur Efsan Cetinkaya and postdoctoral researcher Magdalena Wischnewski (TU Dortmund University), contributed two papers that focus on the role of certification, system performance, and communication in shaping trust.

The full paper “Certified AI System = Trustworthy? Exploring Expert and Lay User Perceptions and Needs Regarding AI Certification”, led by Sarah Abdelwahab Gaballah (RUB) together with Nur Efsan Cetinkaya, Magdalena Wischnewski, and Martina Angela Sasse, examined how both experts and non-experts interpret AI certification and what they expect from it.

https://dl.acm.org/doi/10.1145/3772318.3791274

In the full paper “Certified But Imperfect: Investigating The Role of AI Certifications And System Performance on Trust in And Reliance on AI Systems”, Magdalena Wischnewski, together with Alisa Scharmann, Annika Ridder, and Nicole Krämer, investigated how certification interacts with actual system performance – and how certifications elevate user expectations beyond what the certification can guarantee.

https://dl.acm.org/doi/10.1145/3772318.3790983

Complementing this, the poster “Does How We Talk About LLMs Matter? Effects of Mentalistic and Mechanistic Language on User Perceptions”, by Magdalena Wischnewski and Dennis Nguyen, explored how different ways of describing AI systems influence how users perceive and evaluate them.

https://dl.acm.org/doi/10.1145/3772363.3798547

Connecting perspectives

Taken together, these contributions show how different strands of research at RC Trust complement each other. Questions of governance, interaction design, and user perception are closely intertwined when it comes to building trustworthy AI systems.

The strong presence at CHI 2026 underlines that this work is not only locally grounded but also part of an international research dialogue, contributing to how human-centred AI is understood and shaped today.

The next ACM CHI Conference on Human Factors in Computing Systems will take place from May 10–14, 2027, in Pittsburgh, USA, bringing the international HCI community together once again.

Category

- Publication

- Network

- Event

- Award

Author

Giulia Barbareschi, Leon Hannig, Sven Mayer, Jat Singh, Patrick Wilking, Magdalena Wischnewski