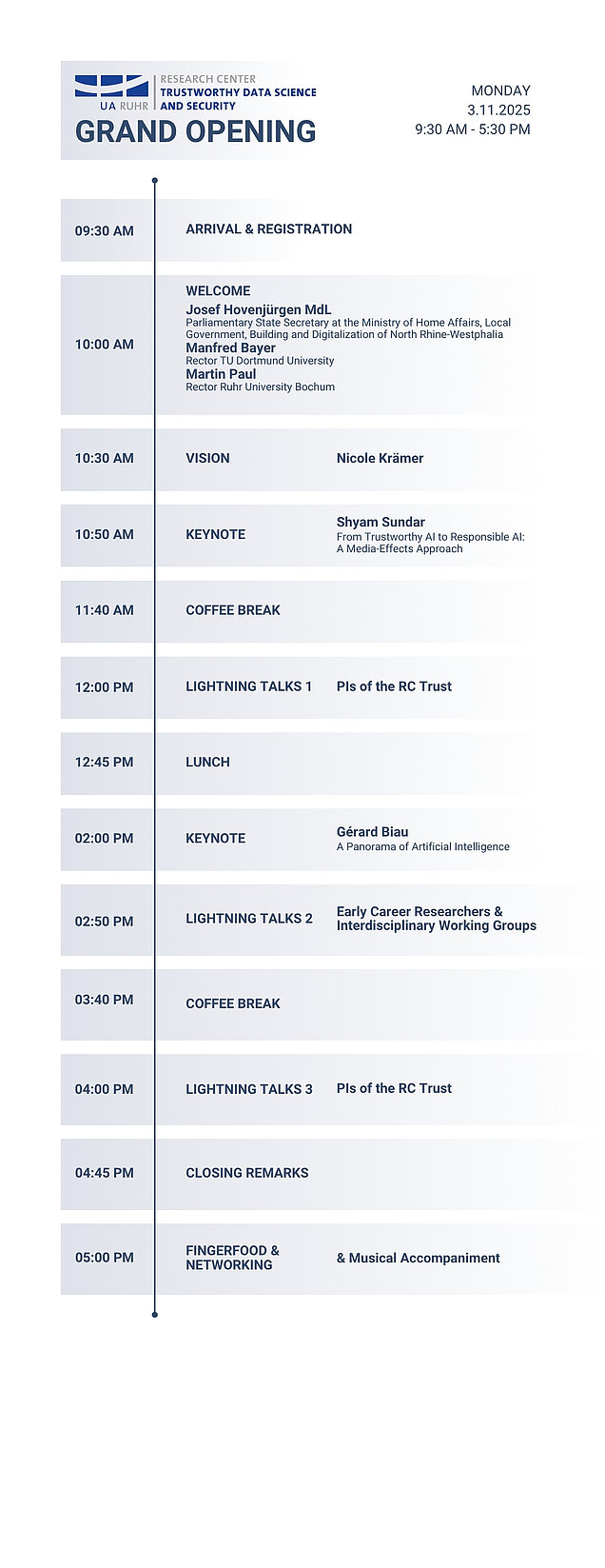

3 November 2025

Grand Opening of the RC Trustworthy Data Science and Security

On 3 November 2025, we will celebrate the official opening of the Research Center for Trustworthy Data Science and Security (RC Trust) at the Dortmunder U. After a successful consolidation phase, we are proud to present our center to the public – with an inspiring program and guests from academia, industry, and politics.

A special highlight will be our keynote speakers, who will provide diverse and insightful perspectives on Trust & AI:

Gérard Biau

A panorama of artificial intelligence

The Director of the Sorbonne Center for Artificial Intelligence in Paris will share reflections on the relationship between science, society, and trust in data-driven systems. As a leading mathematician, he plays a key role in shaping European AI research.

[Learn more]

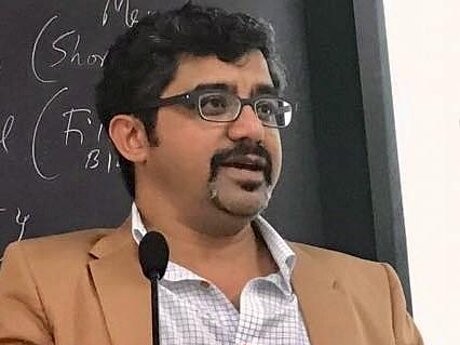

S. Shyam Sundar

From Trustworthy AI to Responsible AI: A Media-Effects Approach

The Director of the Center for Socially Responsible AI at Penn State University (USA) is one of the world’s foremost scholars in media psychology. He studies how people perceive algorithmic decisions and what social implications AI technologies entail.

[Learn more]

The scientific program also includes presentations and demonstrations from several RC Trust researchers.

The event will start at 9:30 AM. The preliminary schedule is available here.

12:00 PM - 12:45 PM

Augmented Inclusion: Technology Opportunities for Fairer Societies

Co-designing technologies with marginalized communities offers unique opportunities to drive innovation and deliver social impact. In this talk, I highlight real-world examples, including virtual reality supporting gender-affirming mental health for LGBTQIA+ individuals, telepresence robots enabling inclusive hospitality work for people with disabilities, and electronic toolkits expanding creative participation of older adults, to show how collaboratively designed technologies enhance individual abilities and boost participation. I’ll conclude with an invitation to help shape a new generation of technologies that leaves no one behind.

[About Giulia]

How AI can corrupt human ethical behavior

As AI becomes part of our daily lives, a key question emerges: can machines corrupt our moral compass? My talk explores how AI shapes human ethical behavior. I outline four roles through which machines influence us—role models, advisors, partners, and delegates. Recent experiments show that AI advisors can be just as corrupting as humans, while AI delegates let people benefit from unethical choices without feeling guilty—a dangerous combination. I’ll close by highlighting which interventions, including some in the EU AI Act, may help prevent such risks and sketching a roadmap for making AI safer for society.

[About Nils]

Characterizing and Detecting Propaganda-Spreading Accounts on Telegram

Information operations, such as disinformation and propaganda, are present on messaging platforms such as Telegram, Discord, and WhatsApp, where moderation is limited and localised. We analysed over 17 million Telegram messages and uncovered two distinct networks of propaganda-spreading accounts. To combat this, we developed an AI-based detector that leverages the way propaganda accounts reply to real users. It achieves 97.6% accuracy, 11.6 percentage points above human moderators, and remains robust against evolving propaganda strategies.

[About Rebekah]

Building the Next Generation of Human AI Systems

Artificial intelligence is transforming how we design interactive systems, moving from tools to partners in everyday life. Our group investigates human AI systems that are trustworthy, adaptive, and aligned with human needs. Drawing on advances in physiological computing, generative AI, and human–robot interaction, we are building the next generation of human AI systems.

[About Sven]

Talking to alien intelligence: Social psychological views on human-AI-teams

In order for human-AI-teams to excel, humans need to trust the AI to the degree the system actually is trustworthy. However, various attributes of the system, such as anthropomorphized cues, might trick users into trusting. We will discuss against the background of empirical evidence how calibrated trust can be instilled and whether it is helpful to increase human understanding of system functioning by fostering the emergence of a theory of the artificial mind.

[About Nicole]

Dancing with Drones: How Humans Calibrate Trust for the Future of Work

Working with a drone is like learning to dance with a new partner - at first you don’t know their rhythm, sometimes they move too fast or disappear around the corner. Over time, trust emerges as you learn each other’s steps. Our research studies how human-drone interaction shapes trust, mental models, behavior, and intention to use, laying foundations for a future Theory of the Artificial Mind (ToAM).

[About Lisa]

2:50 PM - 3:40 PM

What Does AI Really Learn?

Behind every impressive AI model lies a hidden process of compression: turning vast amounts of data into compact understanding. Our work shows that this compression happens in two ways: informationally, in what is remembered, and geometrically, in how concepts are arranged inside the network. These two forms of compression are not identical, and their subtle tension determines how well AI systems generalize, adapt, and sometimes fail. Recognizing this relationship is, in our opinion, key to building models that are both powerful and trustworthy. By linking abstract mathematics with practical performance, we move toward a science of why AI works.

[About Linara]

How does the public perceive AI? Findings from five countries

Technical definitions dominate the AI debate – but how does the public actually perceive AI? And what do people understand by ‘artificial intelligence’? And what are the consequences of this? This pilot study examines understandings of AI in five countries (UK, USA, India, South Africa, Australia; N = 2,500) and combines open-ended descriptions with quantitative measures such as trust. Participants' spontaneous descriptions of AI are diverse, ranging from tool-focused to human-like. The multi-method approach not only provides important insights for the planned 20-nation expansion, but also provides impetus for technical development, regulation and research. The findings reveal that the public's perception of AI is complex.

Intimacy by Design: Safeguarding Trust and Privacy in Chatbot Relationships

Social chatbots mirror the ways we form relationships with each other, creating interactions that can become so engaging that people find themselves sharing intimate thoughts, developing closeness, and even entering into romantic or sexual bonds. In our junior research group INTITEC we investigate which forms of intimacy of a romantic and sexual nature emerge and how they can be situated, as well as the privacy risks that accompany such interactions and how the EU AI Act may help to safeguard autonomy through informed use.

[About Lisa]

Trust at the Silicon Level: Human Factors in Hardware Security

Hardware security is not just a technical challenge — it’s also a cognitive one. My research examines how analysts reason as they reverse engineer chips and how they detect malicious modifications. By uncovering these human factors, we can design tools and methods that strengthen hardware security and provide the secure foundation that trustworthy AI depends on.

[About Steffen]

Private by Design - or Just Perception? Trust in Organizational AI

Many organizations are now introducing LLMs, such as OpenAI's GPT, as internal chatbots, promising safer and customized AI support for their employees. In our research, we explore the opportunities and the hidden risks of these LLM-as-a-Service integrations. Results show that the way AI is framed and embedded in organizations can shape employees' trust in it beyond its actual capabilities or risks.

[About Greta]

How statistics makes mud and ice usable for improving climate predictions

Valuable information about past climate changes is hidden below the surface, in sediments and ice that are thousands or millions of years old. To make optimal use of this data, we need to combine imprecise information on their age and the climate dependency of their formation processes in robust statistical models. The resulting climate reconstructions allow us to test whether climate models can reliably simulate Earth’s history and to improve models that predict the future climate.

[About Nils]

4:00 PM - 4:45 PM

Can we know what LLMs know?

LLMs are rapidly matching, and even surpassing, humans across a wide range of analytical tasks. Yet they fail in ways that are surprising given their capabilities. In this talk, I will share some ongoing work on reliably detecting, root-causing, and mitigating these failure modes.

[About Bilal]

How we debunk the "Tale of Two Courts" in legal theory with state-of-the-art argument mining

A quick way to offend a judge is to call them "formalistic", yet research in legal theory claims that Central and Eastern Europe is the last bastion of formalism and that the predominant legal culture is formalist. In the Czech Republic, the "Tale of Two Courts" is common wisdom: it takes for granted that the supreme court is rigidly formalistic, as it is inherited from the communist era, while the newly created supreme administrative court is non-formalistic. I will present our latest interdisciplinary research project, in which legal theorists and researchers in contemporary natural language processing and argument mining join forces to empirically challenge this status quo. Is the post-communist Central and Eastern European judiciary really stuck in the past?

[About Ivan]

Whose AI Is It Anyway?

AI is often deployed into people’s lives with little involvement from those affected. We explore ways of opening up design and governance so that systems can be shaped by the input of communities. Yet although this sounds promising, organisations often don’t know how to do it in practice or lack the incentives - a challenge we must address if AI is to reflect what we actually want, need, and value.

[About Emma]

Chocolate and Nobel Laureates: Why Correlation does not imply Causality

This talk highlights the pitfall of mistaking correlation for causation when training AI models on observational data. Using the well-known example linking chocolate consumption to Nobel laureates, we show how such curious associations point to the pervasive risk of spurious correlations in AI.

[About Alexander]

Filling in the Blanks: How Smart Imputation Helps Monitor Endangered Species (and Beyond)

Monitoring endangered species is vital, yet collecting complete data in the field is often too costly or impossible - field teams frequently return with incomplete records. We show how smart imputation - filling in missing observations in a clever way - can still yield reliable insights, opening the door to broader and more sustainable monitoring. More broadly, our research highlights that there is no single “best” method: one approach may excel at prediction, another at drawing safe conclusions, and another at producing official statistics. The key lesson is simple: aligning imputation methods with purpose ensures that missing data never compromise science, policy, or conservation decisions.

[About Markus]

Fooling Neural Networks

Neural networks have transformed our daily lives, powering technologies like voice assistants, personalized recommendations, and autonomous driving. However, despite their impressive capabilities, modern AI systems are surprisingly vulnerable to manipulation. This talk explores this critical risk through the lens of a timely topic: "chat control". Using the example of NeuralHash, a client-side scanning technology designed by Apple to combat Child Sexual Abuse Material, we will demonstrate how easily state-of-the-art AI can be tricked into misbehaving and highlight the dangers this poses when deployed at scale.

[About Daniel]

Unfortunately, we have very limited space, so participation is by invitation only. Please use our online registration tool to confirm your attendance.

If you have any questions about the event or need further assistance, feel free to contact us at grand-opening@rc-trust.ai.

Nestled atop a historic brewery building, the Dortmunder U blends iconic industrial heritage with a vibrant, modern cultural heartbeat, making it a truly inspiring venue for RC Trust’s Grand Opening. Here, innovation and creativity converge in a setting that immediately sparks curiosity, right at the heart of Dortmund.

Dortmund Hauptbahnhof (Hbf) is the city’s main rail hub, served by a wide array of long-distance, regional, and local trains, making it the primary arrival point for national and international visitors.

High-speed and intercity services including ICE (e.g., to/from Munich, Berlin, Hamburg), Intercity, Eurostar, and FlixTrain connect Dortmund with major German and European destinations.

Frequent Regional-Express (RE) and Regionalbahn (RB) services run across the Rhine-Ruhr area. Examples include NRW-Express, Rhein-Emscher-Express, Wupper-Express, Rhein-Weser-Express, Rhein-Hellweg-Express, RB 50 “Der Lüner,” among others.

S-Bahn and Regionalbahn lines like S1, S2, RB43, RB51 also serve Hbf.

A short 5-minute walk via Königswall and Brinkhoffstraße delivers you directly to the U‑tower.

Alternatively, exit Hbf and walk toward the cultural district—signage or local wayfinding will guide you seamlessly.

Lines U41, U43, U47, U45/U46 stop at Dortmund Hbf (underground), with U45/U46 changing at Westfalenhallen. These connections offer quick access from across Dortmund.

Address for navigation: Leonie‑Reygers‑Terrasse, 44137 Dortmund—or alternatively Otto‑Meinecke‑Straße for directions toward the nearby parking garages.

Recommended route: From the B1 (via A40 from Essen or A44 from Unna/A1), take the exit toward the city center and proceed along Ruhrallee → Südwall → Brinkhoffstraße → Otto‑Meinecke‑Straße.

Parkhaus Dortmund U (Brinkhoffstraße 30 / Otto‑Meinecke‑Straße 1)

Covered, with 500+ spaces (including disabled parking spots) and a maximum vehicle height of about 2 m.

Tiefgarage Westendtor (Schmiedingstraße 25)